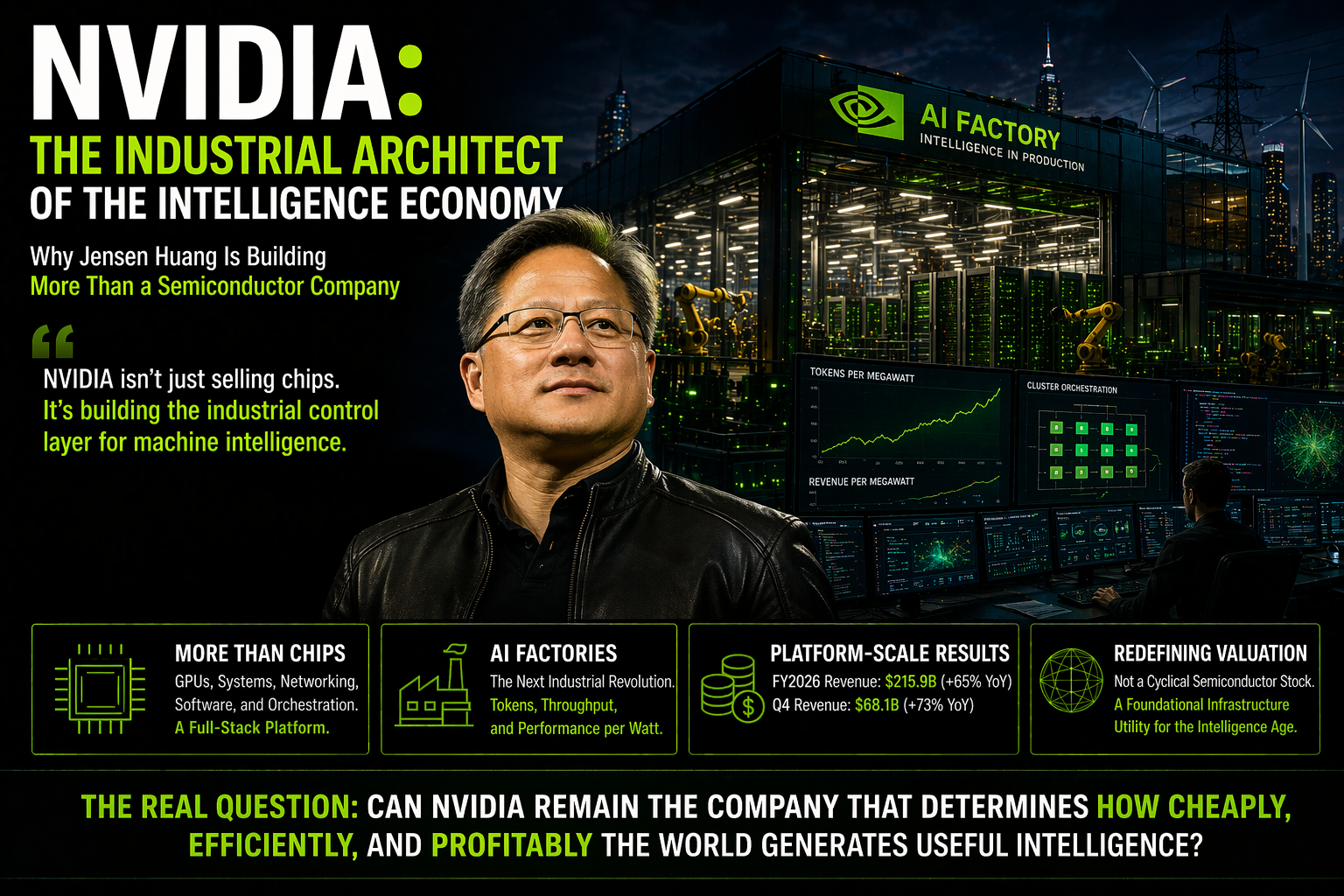


# NVIDIA: The Industrial Architect of the Intelligence Economy

Why Jensen Huang Is Building More Than a Semiconductor Company

The easiest way to misunderstand NVIDIA in 2026 is to call it a chip company.

That description is technically correct.

It is also strategically too small.

NVIDIA still designs and sells semiconductors. But the company's real ambition is much larger: it is trying to become the industrial control layer for the production of machine intelligence. That is why Jensen Huang increasingly talks about AI factories, token economics, and performance per watt — not just faster GPUs. NVIDIA's own technical materials now explicitly frame the future in terms of maximizing token throughput and revenue per megawatt across the AI stack.

This distinction matters for investors because old valuation frameworks were built for old business models. A traditional semiconductor company sells components into somebody else's value chain. NVIDIA is increasingly trying to shape the value chain itself — across chips, systems, interconnects, networking, software, orchestration, and inference economics.

That helps explain why fiscal 2026 revenue reached $215.9 billion, up 65% year over year, with fourth-quarter revenue of $68.1 billion, up 73% year over year.
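The growth rates quoted above imply prior-period baselines, which gives a quick sense of the absolute size of the jump. The sketch below uses only the figures in this section. The function name `implied_prior` is illustrative, and the results are approximate because the quoted growth rates are rounded.

```python
def implied_prior(current_billions: float, yoy_growth: float) -> float:
    """Back out the prior-period figure from a current value and its YoY growth rate."""
    return current_billions / (1.0 + yoy_growth)

# Figures quoted in the text (USD billions, growth as a fraction).
fy2026_revenue, fy2026_growth = 215.9, 0.65
q4_revenue, q4_growth = 68.1, 0.73

prior_year = implied_prior(fy2026_revenue, fy2026_growth)
prior_q4 = implied_prior(q4_revenue, q4_growth)

print(f"Implied prior-year revenue: ~${prior_year:.1f}B")
print(f"Implied prior-year Q4 revenue: ~${prior_q4:.1f}B")
print(f"Implied absolute increase: ~${fy2026_revenue - prior_year:.1f}B")
```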

Those are not just strong numbers.

They are platform-scale numbers.

Our core view is simple:

> NVIDIA should now be analyzed less like a cyclical semiconductor stock and more like a foundational infrastructure utility for the intelligence age.

The real investor question is no longer, "How many GPUs can NVIDIA still sell?"

It is, "Can NVIDIA remain the company that determines how cheaply, efficiently, and profitably the world can generate useful intelligence?"

If the answer remains yes, the valuation debate changes materially.

## 1. Jensen Huang's Real Bet: AI Computing Is Compounding Faster Than the Old Semiconductor Playbook Can Explain

One of the most important pieces of context in the NVIDIA story is Jensen Huang's belief that traditional semiconductor intuition is no longer enough.

Secondary reporting on Huang's recent remarks summarized his view that effective GPU computation has risen roughly a million-fold over the past decade, driven not only by transistor density but also by architecture, systems design, and software. Whether or not investors focus on the exact phrasing, the broader point is consistent with NVIDIA's strategy: value is now being created through the coordinated compounding of hardware, networking, memory movement, inference optimization, and software.

Not through silicon scaling alone.

That is why NVIDIA's roadmap matters so much. This is no longer a simple rhythm of launching a better GPU every few years. At CES 2026, NVIDIA formally launched the Rubin platform, describing it as six tightly integrated chips forming one AI supercomputer, designed to build, deploy, and secure the world's largest AI systems at lower cost.

Rubin is not being marketed as a part.

It is being marketed as a system-level standard for the next phase of AI infrastructure.

This is the key shift.

NVIDIA is no longer really selling speed in isolation. It is selling a future rate of intelligence production that competitors will struggle to match across the whole stack.

That is a much stronger business than the phrase "chipmaker" implies.

## 2. Jensen Huang's Leadership Is Best Understood as Industrial First-Principles Thinking

To understand NVIDIA, investors have to understand Jensen Huang's style — not as personality branding, but as operating discipline.

The most useful American analogy is not a consumer company extending a successful product line. It is closer to a company trying to become the electric grid of a new industrial age.

In the early 20th century, what mattered was who could generate and distribute the cheapest reliable power. In the AI economy, what may matter just as much is who can produce and route the cheapest reliable intelligence.

That is the frame Huang is building toward.

NVIDIA's recent technical communications make clear that the company thinks in terms of hard physical constraints: power, cooling, bandwidth, interconnect, latency, rack density, and inference efficiency. Its engineering story is not "make the next chip faster." It is "optimize the entire AI production function."

NVIDIA's own developer materials now explicitly tie higher performance per watt to higher token throughput and higher revenue within a fixed power envelope.
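The link between performance per watt and revenue within a fixed power envelope can be made concrete with a little arithmetic. The sketch below is illustrative only. Every number in it (facility power, tokens per joule, price per million tokens) is a hypothetical placeholder rather than an NVIDIA figure, and the model ignores utilization, cooling overhead, and pricing dynamics.

```python
from dataclasses import dataclass

SECONDS_PER_YEAR = 365 * 24 * 3600

@dataclass(frozen=True)
class AIFactory:
    power_mw: float                # fixed power envelope (hypothetical)
    tokens_per_joule: float        # efficiency, i.e. performance per watt (hypothetical)
    usd_per_million_tokens: float  # selling price (hypothetical)

    def tokens_per_second(self) -> float:
        # 1 MW = 1e6 joules per second; throughput scales linearly with efficiency.
        return self.power_mw * 1e6 * self.tokens_per_joule

    def annual_revenue_per_mw(self) -> float:
        tokens_per_year = self.tokens_per_second() * SECONDS_PER_YEAR
        revenue = tokens_per_year / 1e6 * self.usd_per_million_tokens
        return revenue / self.power_mw

# Same power budget and price; only efficiency differs.
baseline = AIFactory(power_mw=100, tokens_per_joule=0.5, usd_per_million_tokens=2.0)
improved = AIFactory(power_mw=100, tokens_per_joule=1.0, usd_per_million_tokens=2.0)

ratio = improved.annual_revenue_per_mw() / baseline.annual_revenue_per_mw()
print(f"Revenue per MW, baseline: ${baseline.annual_revenue_per_mw():,.0f}")
print(f"Revenue per MW, improved: ${improved.annual_revenue_per_mw():,.0f}")
print(f"Ratio: {ratio:.1f}x")
```

With power held fixed, doubling tokens per joule doubles revenue per megawatt in this simplified model. That is the sense in which an efficiency gain shows up as revenue rather than only as a smaller power bill.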

That is also why Huang remains unusually difficult to classify.

He does not sound like the CEO of a mature hardware company protecting a franchise. He sounds like the operator of an industrial buildout. Recent reporting also showed him still leaning into an underdog mentality, arguing that NVIDIA does not assume it can choose all future winners because it remembers what it was like to be one startup among many.

That mindset matters because it helps explain why the company keeps extending outward rather than defending a single product category.

## 3. The Valuation Problem Is Really a Business-Model Problem

The most striking thing about NVIDIA is not simply that it trades at a premium.

It is that conventional valuation language often fails to capture why the premium persists.

The intuition behind the premium has a basis in the numbers: NVIDIA's profit structure is extraordinary. For fiscal 2026, the company generated $88.9 billion in GAAP net income on $215.9 billion in revenue, while returning $41.1 billion to shareholders through buybacks and dividends during the fiscal year.

That is an unusual combination of scale, growth, and profitability — especially for a company still widely categorized as hardware.
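The ratios implied by those fiscal 2026 figures are worth working out explicitly. This is a minimal sketch using only the numbers quoted above; the variable names are illustrative.

```python
# Figures quoted in the text for fiscal 2026 (USD billions).
revenue = 215.9
gaap_net_income = 88.9
shareholder_returns = 41.1  # buybacks plus dividends

net_margin = gaap_net_income / revenue
payout_ratio = shareholder_returns / gaap_net_income

print(f"GAAP net margin: {net_margin:.1%}")
print(f"Share of net income returned to shareholders: {payout_ratio:.1%}")
```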

That is why NVIDIA keeps breaking the old mental models.

Traditional semiconductor companies usually face:

- Margin pressure

- Hardware commoditization

- Weak software lock-in

NVIDIA has delayed and diluted those forces through CUDA, networking, rack-scale system design, inference software, and AI-factory orchestration. The company has not eliminated competition. But it has made competition much harder than "build a faster chip."

Put differently: the valuation only looks impossible if one insists on analyzing NVIDIA as though it were in the same category as legacy semiconductor vendors.

If investors instead treat it as the company trying to sell the compute stack, logistics stack, software stack, and operating model for the intelligence economy, then the premium becomes easier to understand — even if it still requires discipline and close monitoring.

## 4. From GPU Company to AI Factory Company

This is the conceptual center of the NVIDIA story.

At GTC 2026, NVIDIA and its ecosystem increasingly framed data centers as AI factories — facilities designed not just to host compute, but to continuously produce tokenized machine intelligence at industrial scale.

Independent coverage from Data Center Frontier described this as a shift from the training era to the inference-and-agents era, where data centers evolve into revenue-generating factories optimized for persistent AI output. NVIDIA's own blog uses the same framing and connects infrastructure efficiency directly to token throughput and revenue per megawatt.

This is where the token economy idea becomes important.

In the AI era, the relevant unit of work increasingly becomes the token. Every prompt, code completion, reasoning trace, retrieval step, and agent action resolves into tokens processed through infrastructure.

NVIDIA is positioning itself as the company that helps customers produce those tokens more cheaply, more quickly, and with better energy economics. Its own materials say that Blackwell-based deployments have reduced cost per token by up to 10x for some leading inference providers compared with Hopper.

That is why NVIDIA increasingly looks less like a part supplier and more like an industrial utility of intelligence production.

The best American metaphor is not a product company.

It is a company that becomes the power plant and grid operator for a new class of economic output.

## 5. The Token Economy Is Not a Slogan. It Is an Emerging Business Model.

One reason this framing matters is that NVIDIA is not the only company now talking this way.

Microsoft CEO Satya Nadella has publicly described the future in terms of token factories and tied AI-era productivity to token output relative to energy and infrastructure usage.

That matters because it shows one of NVIDIA's largest ecosystem partners is already thinking of intelligence as an industrial output — not just a software feature.

This is the deeper investment point:

> NVIDIA is not merely benefiting from enthusiasm around AI. It is helping define the economic logic of AI itself.

Once AI usage shifts from novelty to production, the right questions become:

- How much does each useful token cost?

- How much revenue can each megawatt support?

- How much throughput can each rack deliver?

- How much of the software and systems stack belongs to the same vendor?

Those are much more durable questions than benchmark scores or short-lived consumer excitement.

NVIDIA is trying to own the answers to those questions.

## 6. Why the Stock Cooled: NVIDIA Created the Market, Then the Supply Chain Was Repriced

One common explanation for the stock's cooler performance is that NVIDIA "shared" some of its gains with the supply chain. That idea is directionally right.

As AI infrastructure spending broadened, investors began repricing memory, advanced packaging, networking, power, and rack suppliers that had been undervalued relative to NVIDIA itself. That is a normal market response when one company's industrial buildout becomes large enough to create bottlenecks elsewhere.

But it would be a mistake to interpret that as NVIDIA losing control of the value story.

NVIDIA has responded by extending its grip upward into the software and operating layer. The launch of Dynamo 1.0, which NVIDIA describes as the distributed operating system of AI factories, is a strong example. The company is trying to ensure that even as hardware becomes more widely distributed across the supply chain, the orchestration layer remains NVIDIA-defined.

That is a very different dynamic from a company simply handing profit away.

It is closer to a platform leader allowing suppliers to benefit from scale while keeping control of the core operating standard.

## 7. The Next Battlefield Is Inference Economics, Not Just Training Scale

Investors still tend to think the AI cycle is mainly about training giant models.

NVIDIA is already moving the conversation beyond that.

Blackwell and Rubin are being framed not just as faster training systems, but as infrastructure optimized for agentic inference, large-scale serving, and lower token cost. NVIDIA's recent blog says Blackwell-based production environments have helped major inference providers reduce token cost by 4x to 10x, depending on workload and model type.
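To see what a 4x to 10x reduction means for a serving bill, the sketch below applies that range to a hypothetical baseline. The baseline cost of $1.00 per million tokens and the monthly token volume are assumptions chosen for round numbers, not reported figures.

```python
BASELINE_USD_PER_MILLION = 1.00      # hypothetical cost on the older platform
MONTHLY_TOKENS = 500_000_000_000     # hypothetical workload: 500 billion tokens

def monthly_cost(usd_per_million: float, tokens: int) -> float:
    """Total serving cost for a given per-million-token cost and token volume."""
    return usd_per_million * tokens / 1_000_000

baseline_cost = monthly_cost(BASELINE_USD_PER_MILLION, MONTHLY_TOKENS)
costs_after = {
    factor: monthly_cost(BASELINE_USD_PER_MILLION / factor, MONTHLY_TOKENS)
    for factor in (4, 10)  # the reduction range quoted in the text
}

print(f"Baseline monthly cost: ${baseline_cost:,.0f}")
for factor, cost in costs_after.items():
    saving = 1 - cost / baseline_cost
    print(f"After {factor}x reduction: ${cost:,.0f} (saves {saving:.0%})")
```

The same arithmetic can be read the other way: at an unchanged budget, a 4x to 10x lower cost per token funds 4x to 10x more tokens.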

Rubin is being positioned as the next leap, with system-level designs meant to drive down training time and inference token cost even further.

This matters because the real long-term economics of AI will not be set by training one breakthrough model.

They will be set by the recurring cost of running trillions of model calls, agents, copilots, software workflows, and enterprise reasoning tasks.

NVIDIA's current strategy is built around staying central to that recurring layer.

That is a much larger opportunity than a one-time capex boom.

## 8. What U.S. Investors Should Actually Watch

If investors want to analyze NVIDIA correctly from here, they should focus less on whether the stock "looks expensive" and more on whether the company continues to win the industrial logic of the intelligence economy.

Four things to watch most closely:

First, whether demand for AI factories remains broad across hyperscalers, sovereign AI programs, frontier labs, and enterprises. NVIDIA's own commentary around fiscal 2026 results said customers are still racing to invest in AI compute and described those systems as the factories powering the AI industrial revolution.

Second, whether NVIDIA keeps improving cost per token and performance per watt faster than competitors can close the gap. That is the real battlefield in the token economy.

Third, whether the company continues to hold the orchestration and software layer — not just the hardware layer. CUDA, networking, AI-factory software, and rack-scale design are what turn product leadership into system leadership.

Fourth, whether AI applications keep turning into real economic workloads rather than staying in the pilot phase. If AI becomes a recurring production expense tied to real revenue or real productivity, NVIDIA's position becomes more durable because it is pulled by customer economics rather than just sentiment.

## 9. The Risk Case: What Could Break the Thesis

A strong report has to respect the counterargument.

### Risk 1: Hyperscaler internalization

AWS (Trainium/Inferentia), Google (TPU), and Microsoft (Maia) are all building internal chips. If hyperscaler workloads shift meaningfully to in-house silicon, NVIDIA's growth vector narrows.

### Risk 2: Inference cost compression

As inference efficiency improves across the industry, the revenue captured per token may fall faster than token volume grows to compensate.
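The break-even condition behind this risk is simple. If revenue is treated as price per token times token volume, total revenue grows only when the volume multiple exceeds the price-decline multiple. The sketch below uses hypothetical multiples, and the price-times-volume model is itself a simplification of how a hardware vendor captures value.

```python
def revenue_multiple(price_decline_factor: float, volume_growth_factor: float) -> float:
    """Revenue change when price per token falls by one factor and volume grows by another.

    A price_decline_factor of 5 means the price falls to one fifth of its old level.
    """
    return volume_growth_factor / price_decline_factor

# Hypothetical scenarios: (price decline factor, volume growth factor)
scenarios = {
    "volume outruns price cuts": (5.0, 8.0),
    "break-even": (5.0, 5.0),
    "price cuts outrun volume": (5.0, 3.0),
}

for name, (decline, growth) in scenarios.items():
    print(f"{name}: revenue changes by {revenue_multiple(decline, growth):.2f}x")
```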

### Risk 3: Geopolitical and export constraints

Continued restrictions on advanced chip exports to China could structurally shrink NVIDIA's addressable market.

### Risk 4: Valuation multiple compression

Even if fundamentals remain strong, multiple compression in a broader market reset could produce meaningful drawdowns regardless of operating performance.

None of these risks are disqualifying.

All of them are worth monitoring.

## 10. Investment Conclusion

The strongest version of the NVIDIA bull case is no longer that it sells the best chips in the hottest category.

That story is already too small.

The stronger version is this:

> NVIDIA is building the industrial architecture for producing intelligence at scale.

In the industrial age, the great winners controlled energy, transport, or communications.

In the software age, the great winners controlled operating systems, search, and cloud.

In the intelligence age, the great winners may be the companies that control how cheaply, efficiently, and profitably the world can produce tokens.

NVIDIA is the clearest candidate for that position today.

That does not mean the stock cannot correct.

It does not mean competition vanishes.

It does not mean valuation risk is irrelevant.

It means that investors who continue to analyze NVIDIA as if it were just another semiconductor company are probably using the wrong map.

## Final Line

NVIDIA is no longer best understood as a chipmaker.

It is becoming the industrial architect of the token economy — and that may prove to be one of the most valuable positions in modern markets.

This Special Report is part of Brutal Edge's "Intelligence Economy" series, examining how machine intelligence is being industrialized across Big Tech. Related analysis: The Rise of Claude (Anthropic), The Token Economy (Amazon/MS/Google), and The Final Frontier (M7 AGI Map).


Data: Financial Modeling Prep, Alpha Vantage, CoinGecko

NOT investment advice. Always do your own research.