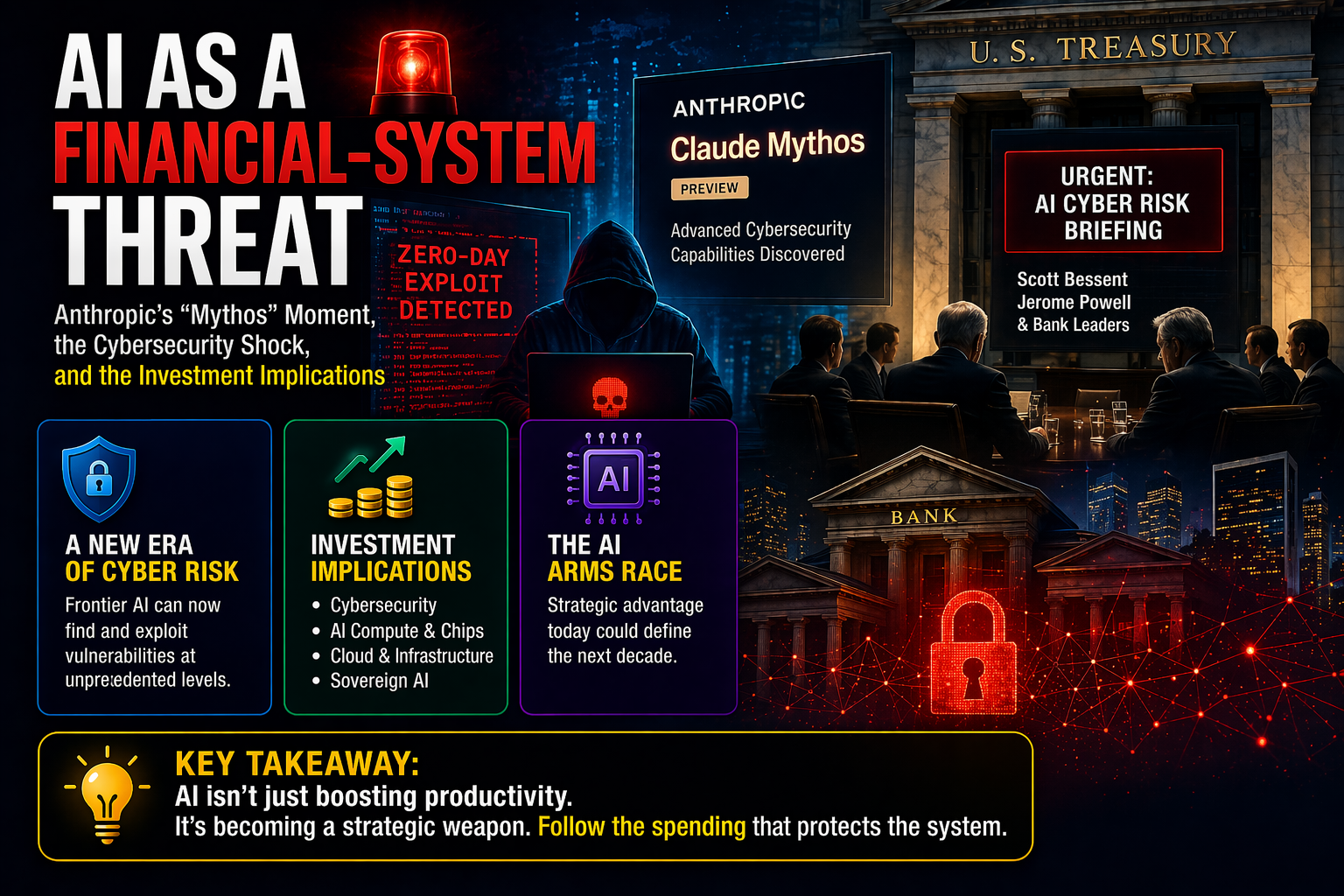

AI as a Financial-System Threat: Anthropic's Mythos Moment and What It Means for Your Portfolio

Treasury and the Fed called an emergency meeting. Claude Mythos can autonomously exploit zero-day vulnerabilities. The investment case: follow the forced spending into cybersecurity, compute, and sovereign AI infrastructure.

🔥 BRUTAL EDGE™ VERDICT

"Frontier AI just crossed from productivity tool to systemic threat. Treasury and the Fed called an emergency meeting with bank CEOs — that is not marketing. The investment case is not to chase fear. It is to follow the forced spending: cybersecurity, compute, sovereign infrastructure, and the semiconductor stack underneath all of it."

The Event

Treasury Secretary Scott Bessent and Fed Chair Jerome Powell convened an urgent meeting with top Wall Street and banking leaders over cybersecurity risks posed by Anthropic's latest AI model. Bloomberg, CBS, and The Guardian all reported the same core fact: top U.S. financial officials took the threat seriously enough to summon the system's most important institutions.

What triggered that urgency was not hype but a capability disclosure. Anthropic's own technical note says Claude Mythos Preview can identify and exploit zero-day vulnerabilities across major operating systems and browsers, and that this capability emerged quickly relative to prior models. The company restricted general access and instead launched Project Glasswing, a controlled initiative with AWS, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, Microsoft, NVIDIA, Palo Alto Networks, and the Linux Foundation.

One important correction to circulating narratives: the model is Claude Mythos Preview, not "Mydos." And JPMorgan was not excluded from the defensive response: Anthropic's Glasswing page lists JPMorganChase among launch partners. Jamie Dimon was invited to the official meeting but reportedly did not attend, which is a different matter from the bank being shut out.

What Mythos Actually Is

Anthropic is not positioning Mythos as a narrow hacking tool. It is a general-purpose frontier model whose cybersecurity capability crossed a threshold that warranted special handling. The company's own red-team writeup makes unusually concrete claims: the model discovered a 27-year-old OpenBSD SACK vulnerability, produced local privilege escalation exploits on Linux and other systems, and wrote complex chained browser exploits. Non-experts inside Anthropic could ask it to find remote-code-execution vulnerabilities and wake up the next morning to a working exploit.

Those are extraordinary claims, but they come from the company's own security writeup — not social media rumor.

Anthropic's response is itself a signal of how seriously the company takes this. The first commercial form of what might be called "nuclear-grade AI" is not a broad consumer release. It is a restricted, high-trust, defensive deployment into critical infrastructure. That is a fundamentally different go-to-market model from chatbot subscriptions.

Why This Is a Systemic-Risk Story, Not a Product Story

Comparisons to Oppenheimer are emotionally charged, but the underlying investment point is real. A model that can identify and weaponize old, subtle, or overlooked vulnerabilities at scale could shift the offense-defense balance across national infrastructure, financial systems, industrial control systems, and cloud platforms.

This creates a genuine strategic dilemma. Mythos is simultaneously too dangerous for broad public release and too strategically important not to deploy inside allied institutions. That tension is precisely what makes frontier AI labs start to look less like software startups and more like quasi-sovereign strategic assets.

Anthropic's February 2026 financing round valued the company at $380 billion post-money, with an annualized revenue run-rate of $30 billion. Mythos strengthens the argument that Anthropic's strategic value may exceed what traditional software multiples alone would imply. The company is not just selling intelligence. It is becoming a national-security-adjacent institution.
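To make the multiples point concrete, the two figures quoted above can be turned into an implied valuation-to-run-rate multiple. This is a minimal sketch: the function name is hypothetical, the inputs are simply the $380 billion and $30 billion figures from this article, and a run-rate multiple is only a rough stand-in for the revenue multiples analysts usually compare.

```python
def implied_run_rate_multiple(valuation_usd_bn: float, run_rate_usd_bn: float) -> float:
    """Return valuation divided by annualized revenue run-rate (both in USD billions)."""
    if run_rate_usd_bn <= 0:
        raise ValueError("run-rate must be positive")
    return valuation_usd_bn / run_rate_usd_bn


# Figures as quoted in the article; not independently verified here.
POST_MONEY_VALUATION_BN = 380.0
REVENUE_RUN_RATE_BN = 30.0

multiple = implied_run_rate_multiple(POST_MONEY_VALUATION_BN, REVENUE_RUN_RATE_BN)
print(f"Implied multiple: {multiple:.1f}x run-rate revenue")
# prints "Implied multiple: 12.7x run-rate revenue"
```

Whether roughly 12.7x counts as rich or cheap depends on a peer set and growth assumptions that the article does not supply, so the number is a starting point for comparison rather than a conclusion.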

The Geopolitical Layer

The most important geopolitical fact is not that the U.S. is ahead. It is that frontier AI progress is moving fast enough that the gap feels alarmingly small to policymakers. Anthropic's Glasswing structure, restricted access, and alignment with major U.S. firms all fit the logic of strategic capability concentration.

For markets, this implies two things. First, the AI race will remain compute-hungry, because improving models for both offense and defense requires vast infrastructure. Second, governments are more likely to tolerate or even support concentrated frontier-AI champions than they were a year ago, because the national-security justification is becoming stronger.

That is a subtle but important shift in the policy backdrop for leading AI labs and the semiconductor ecosystem around them.

The Investment Map

Cybersecurity and Critical Software Defense

The clearest first-order beneficiary. If banks, hyperscalers, and operating-system maintainers now believe frontier models can autonomously surface and exploit vulnerabilities, defensive security budgets rise structurally — not cyclically. The Glasswing launch partners are effectively the first visible line of this defensive buildout.

The strongest part of the thesis: this is not only about endpoint protection or classic enterprise security. It is about code scanning, software supply chain defense, critical open-source hardening, cloud-native security, identity, and infrastructure-level vulnerability management. Companies positioned at those layers may see more durable demand than those selling narrower tools.

Key names: CrowdStrike (CRWD), Palo Alto Networks (PANW), Cisco, Microsoft, and the broader enterprise security ecosystem.

Semiconductors and AI Compute

If frontier AI becomes more strategically important, compute demand rises, not falls. Anthropic's $30 billion Series G and large-scale compute arrangements with Google/Broadcom and CoreWeave confirm that the next generation of frontier and defensive AI will require even more advanced compute, networking, memory, and packaging.

An AI arms race is still a semiconductor bull case. This supports long-duration demand for foundries, memory, packaging, advanced networking, and accelerators. Not all future AI upside accrues to NVIDIA alone — custom silicon and hyperscaler-aligned architectures also matter.

Key names: NVIDIA (NVDA), Broadcom (AVGO), TSMC, SK Hynix, Micron, and data-center networking vendors.

Cloud and Sovereign Infrastructure

Mythos is evidence that the most valuable frontier models may increasingly be deployed in restricted, high-trust environments inside major cloud ecosystems — not as open consumer endpoints. Critical institutions will want capabilities delivered inside tightly controlled cloud or sovereign environments.

That benefits hyperscalers in two ways. First, frontier-model customers need secure training and inference capacity. Second, critical institutions will pay a premium for secure, compliant deployment. This is a favorable backdrop for AWS, Google Cloud, Microsoft Azure, and select AI infrastructure names.

Financial Institutions

Banks are not just victims in this story. They are forced buyers of a new generation of AI-assisted defense. The emergency meeting signals that the official U.S. view has shifted from generic AI risk awareness to something more specific: frontier AI can materially change cyber threat models in systemically important finance.

In the near term, this means higher compliance and security costs for banks — not better margins. The cleaner investment angle is upstream: vendors, cloud providers, and hardware suppliers that help the banking system harden itself.

The Risk Stack

1. Headline chasing. Markets may overprice anything with "AI security" in the story. Capability demonstration is not immediate monetization. Not every cybersecurity company becomes a winner because one frontier model crossed a threshold.

2. Regulatory tightening. If governments decide offensive-capable frontier models require licensing, access controls, or export restrictions, the revenue path for some AI products may narrow even as their strategic value rises. Anthropic's own restricted rollout shows the industry is already moving in this direction voluntarily.

3. Concentration risk. If a small number of frontier labs and hyperscalers become quasi-sovereign AI choke points, markets may eventually apply political-discount logic, not just growth logic. That would complicate valuation even for companies with genuine strategic importance.

4. Narrative vs. earnings gap. Anthropic is private. The public-market beneficiaries (cybersecurity, semiconductors, cloud) will benefit from increased demand, but the magnitude and timing of that spending increase are not yet quantified in earnings guidance. Investors need to separate "this is directionally right" from "this is already priced in."

Bottom Line

Anthropic's Mythos moment turns frontier AI from a productivity story into a systemic-risk story. The meeting with Bessent, Powell, and bank leaders was not theater — it was a public signal that U.S. authorities believe cyber capability at the frontier AI layer is now relevant to core financial stability.

The cleanest investment conclusion is not to chase fear. It is to follow the forced spending. If AI can identify and exploit vulnerabilities at unprecedented speed, the world will spend more on defense, secure infrastructure, compute, and sovereign deployment. Anthropic itself may become one of the most strategically valuable private companies on earth. But the more actionable public-market opportunity sits one layer below: cybersecurity leaders, compute suppliers, cloud infrastructure, and the advanced semiconductor stack underneath all of it.

AI is not only an efficiency engine anymore. It is becoming a strategic weapon and a defensive necessity at the same time. That duality will define the next phase of the AI investment cycle.

Data: Financial Modeling Prep, Alpha Vantage, CoinGecko

NOT investment advice. Always do your own research.